Regulators across the world are finally getting serious about the software supply chain. India’s CERT-In SBOM Technical Guidelines (v2.0, July 2025) go beyond SBOMs: they extend to a broader BOM ecosystem that includes CBOM, QBOM, AIBOM, and HBOM. The requirement is therefore not just about software components, but about understanding the full composition of modern systems.

Globally, the direction is the same, whether it’s US Executive Order 14028, the EU Cyber Resilience Act, RBI Advisory 11/2024, or MeitY’s 2025 guidelines.

The message is clear: you must know exactly what you are delivering and consuming, and be able to prove it.

So organizations reacted.

They asked vendors for SBOMs.

Vendors generated and shared them.

Procurement teams stored them but often lacked the tools to actually read or interpret them.

But one critical question is still being overlooked: Is the SBOM you received actually compliant and usable?

Not at runtime.

Not in production.

But at the moment it is delivered, and every time it changes.

Because here’s the missing piece: Every time your software is updated, your BOM changes too.

New components are added. Versions shift. Dependencies evolve.

But most organizations have no clear way to:

- track what changed

- understand where it changed (component, dependency path)

- and verify whether those changes remain compliant with guidelines

That’s the gap we set out to close.
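To make "track what changed" concrete, here is a minimal sketch of a component-level diff between two CycloneDX JSON documents. It is an illustration only, not our validator's implementation: the function names are hypothetical, components are keyed by name alone (a simplification), and dependency paths are not covered.

```python
def component_index(sbom: dict) -> dict:
    """Map each component name to its version (keying by name is a simplification)."""
    return {c["name"]: c.get("version", "") for c in sbom.get("components", [])}


def diff_sboms(old: dict, new: dict) -> dict:
    """Report components added, removed, or re-versioned between two SBOMs."""
    before, after = component_index(old), component_index(new)
    return {
        "added": sorted(after.keys() - before.keys()),
        "removed": sorted(before.keys() - after.keys()),
        "changed": sorted(
            name for name in before.keys() & after.keys()
            if before[name] != after[name]
        ),
    }


# Made-up sample data for illustration
old = {"components": [{"name": "log4j-core", "version": "2.17.0"},
                      {"name": "guava", "version": "31.0"}]}
new = {"components": [{"name": "log4j-core", "version": "2.17.1"},
                      {"name": "jackson-databind", "version": "2.15.2"}]}

print(diff_sboms(old, new))
# {'added': ['jackson-databind'], 'removed': ['guava'], 'changed': ['log4j-core']}
```

Each entry in the result is a candidate for re-checking against the guidelines before the new release is accepted.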

What “compliant” actually means

An SBOM is not compliant simply because it exists. Not because it’s in CycloneDX or SPDX format. Not because it lists hundreds of components.

Compliance is not about format or volume. It’s about completeness.

CERT-In’s guidelines define 21 mandatory data fields that every compliant SBOM must include. These are not recommendations; they are the minimum baseline required for an SBOM to be useful for security, risk management, and regulatory purposes.

These 21 fields span five critical categories:

Identity — what the component is and where it came from

Name, version, description, supplier, license, origin, and dependencies

Security — what risks it carries

Known vulnerabilities (CVEs), patch status, and cryptographic checksums

Lifecycle — how old it is and how critical it is

Release date, end-of-life date, criticality rating, and usage restrictions

Technical — how it is structured

Executable property, archive property, structured format descriptor, and unique identifiers (PURL or CPE)

Metadata — who created the SBOM and when

Author, timestamp, and comments
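The five categories above can be written down as a simple checklist structure. The keys below are our own shorthand labels for this sketch, not official CERT-In identifiers.

```python
# Shorthand labels for the 21 minimum fields, grouped by category.
# The label spellings are assumptions made for this sketch.
CERT_IN_FIELDS = {
    "Identity": ["name", "version", "description", "supplier",
                 "license", "origin", "dependencies"],
    "Security": ["vulnerabilities", "patch_status", "checksums"],
    "Lifecycle": ["release_date", "end_of_life_date",
                  "criticality", "usage_restrictions"],
    "Technical": ["executable_property", "archive_property",
                  "structured_property", "unique_identifier"],
    "Metadata": ["author", "timestamp", "comments"],
}

total_fields = sum(len(fields) for fields in CERT_IN_FIELDS.values())
print(total_fields)  # 21
```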

When we tested SBOMs generated by widely used tools against these 21 fields, the results were sobering.

Even SBOMs produced by industry-standard tools like the CycloneDX Maven Plugin, complete with hundreds of components, accurate dependency graphs, and valid formats, scored around 33% compliance with CERT-In requirements.

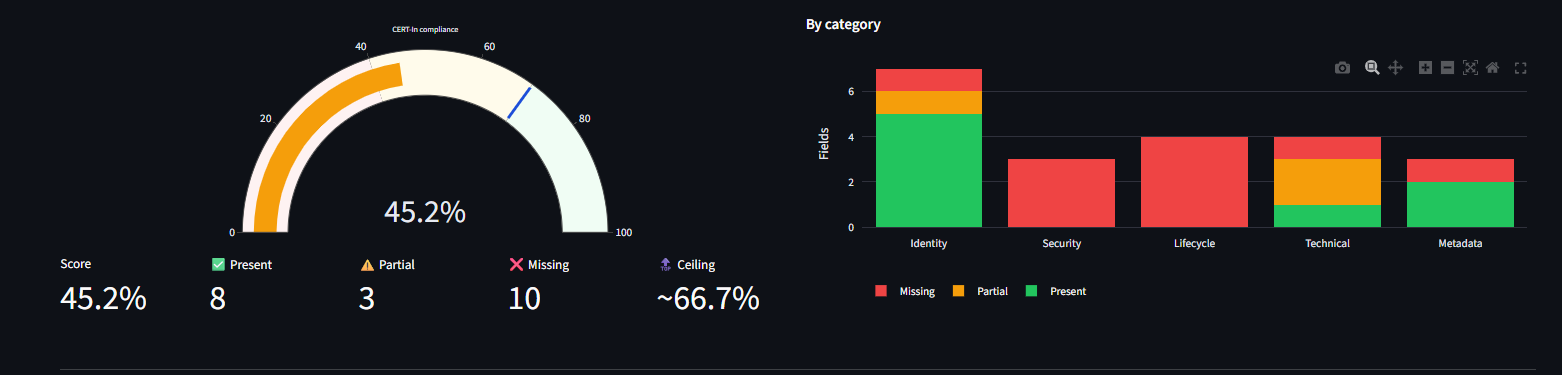

Typical passing score (%) against CERT-In field coverage: overall score vs. automation ceiling, and status by category (example SBOM).

Not because the tools are inadequate.

But because the compliance bar is significantly higher than what automated generation alone can achieve.

The ceiling nobody tells you about

This is the most important thing we discovered while building this validator, and it’s something neither vendors nor regulators clearly articulate.

Of the 21 minimum fields defined by CERT-In, only 14 can be generated automatically by SBOM tools.

The remaining 7 require deliberate human input.

Here’s where automation stops:

- Vulnerabilities — not part of SBOM generation; requires external scanners like Grype, Trivy, or OWASP Dependency-Check

- Patch status — updated by DevSecOps teams after each remediation cycle

- Release date — fetched from package registries and explicitly added

- End-of-life date — sourced from databases like end-of-life.date and enriched manually

- Criticality — derived from business context and asset risk assessment

- Usage restrictions — requires legal, licensing, and export control review

- Comments and notes — internal annotations, exceptions, and risk acceptance

This leads to an uncomfortable but unavoidable truth: the maximum compliance score any auto-generated SBOM can achieve is ~55%. The remaining 45% is intentional; it represents organizational context and accountability that regulators explicitly expect, and that no tool can infer on its own. And yet, no existing SBOM compliance product makes this clear.

Most tools either ignore these hard fields entirely or report a “compliance score” without explaining why 100% is structurally impossible through automation alone.

That blind spot is exactly where most organizations think they are compliant when, in reality, they are only halfway there.
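For reference, the raw arithmetic behind the ceiling looks like this. The sketch counts every field equally, which is an assumption: a plain count gives a higher number than the ~55% quoted above, so that figure presumably reflects a weighting of fields that is not spelled out in this post.

```python
# The seven fields that need human input (shorthand labels, assumed for this sketch)
MANUAL_FIELDS = [
    "vulnerabilities", "patch_status", "release_date", "end_of_life_date",
    "criticality", "usage_restrictions", "comments",
]
TOTAL_FIELDS = 21


def unweighted_ceiling(total: int, manual: int) -> float:
    """Share of fields (in %) that tooling alone could populate, all fields equal."""
    return 100 * (total - manual) / total


ceiling = unweighted_ceiling(TOTAL_FIELDS, len(MANUAL_FIELDS))
print(round(ceiling, 1))  # 66.7
```

Whatever the weighting, the structural point is the same: the seven manual fields put a hard cap on what generation tools can score on their own.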

What we built: the SBOM compliance auditor

We built a tool that fills the gap the market has ignored: it takes any CycloneDX JSON SBOM and evaluates it field by field against CERT-In’s minimum requirements, with complete transparency on what is missing, why, and how to fix it.

Here’s what sets it apart:

Field-by-field CERT-In compliance scoring

Every one of the 21 mandatory CERT-In fields is checked individually. Each field receives one of three statuses: Present, Partial, or Missing. For each finding, the tool provides:

- A plain English explanation

- Exact CycloneDX field mapping

- Concrete recommendations to remediate gaps

The dashboard presents:

- An overall compliance score

- A category breakdown across the five CERT-In field groups

- A clear ceiling indicator showing the maximum achievable score for this SBOM given its tooling origin
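As a rough illustration of three-state scoring, the sketch below checks a handful of fields across all components of a CycloneDX JSON document. It is not the auditor's actual logic. The assumptions are ours: "Partial" means only some components populate the field, Partial counts as half credit, and only a small subset of the 21 fields is mapped.

```python
from enum import Enum


class Status(Enum):
    PRESENT = 1.0
    PARTIAL = 0.5   # half credit is an assumption of this sketch
    MISSING = 0.0


# Shorthand field label -> CycloneDX component key (illustrative subset only)
FIELD_MAP = {
    "name": "name",
    "version": "version",
    "supplier": "supplier",
    "license": "licenses",
    "checksums": "hashes",
    "unique_identifier": "purl",
}


def field_status(components: list, key: str) -> Status:
    """Present if every component has the key, Partial if some do, else Missing."""
    populated = sum(1 for c in components if c.get(key))
    if populated == 0:
        return Status.MISSING
    return Status.PRESENT if populated == len(components) else Status.PARTIAL


def audit(sbom: dict) -> dict:
    components = sbom.get("components", [])
    return {label: field_status(components, key) for label, key in FIELD_MAP.items()}


def score(results: dict) -> float:
    return 100 * sum(s.value for s in results.values()) / len(results)


# Made-up sample data for illustration
sbom = {"components": [
    {"name": "a", "version": "1.0", "purl": "pkg:maven/x/a@1.0",
     "hashes": [{"alg": "SHA-256", "content": "ab"}]},
    {"name": "b", "version": "2.0", "purl": "pkg:maven/x/b@2.0"},
]}

results = audit(sbom)
for label, status in results.items():
    print(f"{label}: {status.name}")
print(round(score(results), 1))  # 58.3
```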

Smart inference from existing data

Some SBOMs partially satisfy CERT-In requirements without explicitly populating all fields. For example:

- A CycloneDX SBOM with type=jar in every PURL implies ZIP-based archives, allowing the tool to infer the archive property

- A component with a VCS URL pointing to GitHub clearly indicates open-source origin

Our validator recognizes these inferences, credits them where appropriate, and flags where explicit declarations would strengthen compliance.
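The two inferences above could be sketched roughly as follows. The function names are hypothetical and the rules are deliberately narrow; the real validator may apply different or additional heuristics.

```python
from urllib.parse import parse_qs, urlparse


def purl_qualifiers(purl: str) -> dict:
    """Extract the qualifiers (the part after '?') from a package URL."""
    _, _, query = purl.partition("?")
    query = query.split("#", 1)[0]  # drop an optional subpath
    return {key: values[0] for key, values in parse_qs(query).items()}


def infer_archive(component: dict) -> bool:
    """A type=jar qualifier implies a ZIP-based archive."""
    return purl_qualifiers(component.get("purl", "")).get("type") == "jar"


def infer_open_source(component: dict) -> bool:
    """A VCS external reference hosted on GitHub suggests open-source origin."""
    for ref in component.get("externalReferences", []):
        if ref.get("type") == "vcs":
            host = urlparse(ref.get("url", "")).hostname or ""
            if host == "github.com" or host.endswith(".github.com"):
                return True
    return False


# Made-up sample component for illustration
component = {
    "name": "guava",
    "purl": "pkg:maven/com.google.guava/guava@31.1-jre?type=jar",
    "externalReferences": [
        {"type": "vcs", "url": "https://github.com/google/guava"},
    ],
}

print(infer_archive(component), infer_open_source(component))  # True True
```

An inferred value is weaker evidence than a declared one, which is why a field satisfied this way is still worth flagging for an explicit declaration.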

Why this matters

The primary beneficiary is the organization receiving the SBOM, not the vendor generating it.

Example: A bank procures core banking software. The vendor delivers a CycloneDX JSON SBOM listing 340 components. The procurement team ticks “SBOM received.” Done.

Reality:

- The file scores 38% against CERT-In fields

- Vulnerabilities are unmapped

- Criticality isn’t assessed

- End-of-life dates are missing for 12 outdated components

- PURL format is incomplete, preventing CVE correlation

None of this is visible in a text editor or via a format validator. You need a compliance auditor.

Upload the vendor’s SBOM, read the compliance report, and you instantly see actionable questions to ask before signing a contract.
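One of those questions, whether the PURLs are complete enough to correlate with CVEs, can be approximated with a quick check like the one below. This is a simplified sketch: the regular expression covers only the basic pkg:type/namespace/name@version shape and is not a full package URL parser, and the function name is hypothetical.

```python
import re

# Simplified shape: pkg:type/path@version, ignoring qualifiers and subpath
PURL_RE = re.compile(
    r"^pkg:(?P<type>[A-Za-z][A-Za-z0-9.+-]*)/(?P<path>[^@?#]+)(?:@(?P<version>[^?#]+))?"
)


def purl_gaps(purl: str) -> list:
    """Return a list of problems that would hinder vulnerability matching."""
    match = PURL_RE.match(purl or "")
    if not match:
        return ["not a pkg: URL"]
    gaps = []
    if not match.group("version"):
        gaps.append("missing version")
    return gaps


print(purl_gaps("pkg:maven/org.apache.logging.log4j/log4j-core@2.17.1"))  # []
print(purl_gaps("pkg:maven/org.apache.logging.log4j/log4j-core"))  # ['missing version']
print(purl_gaps("log4j-core-2.17.1.jar"))  # ['not a pkg: URL']
```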

The broader regulatory picture

India isn’t alone in demanding SBOM quality; it’s just the most prescriptive so far.

- US EO 14028 mandates SBOMs for software sold to federal agencies

- EU Cyber Resilience Act requires SBOMs in product conformity assessments

- FDA cybersecurity guidance makes SBOMs a pre-market submission requirement for medical devices

- RBI Advisory 11/2024 extends SBOM requirements into the banking sector

All these frameworks share a belief: an SBOM is only as valuable as the information it contains. A list of names without versions, hashes, or vulnerabilities is not a true software bill of materials; it’s just a list.

CERT-In’s 21 fields enforce the difference. Organizations ignoring this will face increasing scrutiny. The question isn’t whether SBOM auditing becomes standard; it’s whether you adopt it before a supply chain incident forces the issue.